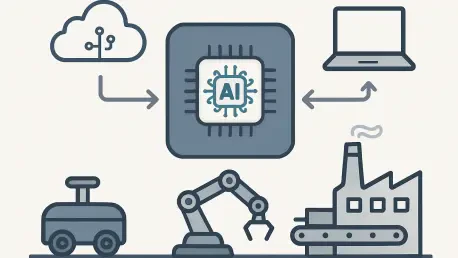

The ability of industrial systems to process large volumes of data directly at the point of production is increasingly defining the performance ceiling of modern manufacturing and automation environments. Edge AI has emerged as a core architectural shift, enabling localized intelligence and allowing industries to move from centralized processing models toward real-time decision-making closer to sensors, machines, and operational assets.

Research indicates that this distributed approach is not merely an incremental optimization but a structural change in how industrial systems operate: edge AI enables low-latency processing and reduces dependence on distant cloud infrastructure for time-critical decisions. The shift matters most in industrial environments where automation, predictive maintenance, and robotics depend on real-time inference, because delays introduced by centralized processing can directly affect efficiency and operational safety. As a result, modern industrial architectures are increasingly designed around hybrid edge-cloud systems, in which intelligence is distributed across the network to support faster response cycles and more resilient operations in complex production environments.

Going from Experimental to Essential

The shift toward decentralized AI is reshaping industrial architecture by moving computation closer to machines, sensors, and production systems. It is driven by advances in machine learning systems that make on-device and edge deployment efficient, easing the long-standing trade-off between computational intensity and latency in real-time industrial environments. Research in edge and distributed inference consistently shows that localized processing is essential for applications requiring ultra-low latency and deterministic performance, particularly industrial automation and distributed sensing, where communication delays can degrade system reliability. In these contexts, edge computing supports real-time decision-making directly at the source of data generation and keeps operations running under intermittent or degraded connectivity. The result is a hybrid edge-cloud division of labor: immediate control and inference occur locally, while heavier model training and aggregation are handled centrally, which provides both scalability and operational resilience across industrial networks.
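The local-first pattern described above can be sketched in a few lines. This is a minimal illustration, not a reference design: `EdgeNode`, `model`, `uplink`, and `max_buffer` are hypothetical names, and the uplink is assumed to be any callable that reports whether delivery to the central system succeeded.

```python
from collections import deque


class EdgeNode:
    """Decides locally; buffers records for the cloud while the link is down."""

    def __init__(self, model, uplink, max_buffer=1000):
        self.model = model    # callable: reading -> decision (runs on-device)
        self.uplink = uplink  # callable: record -> bool (True if delivered)
        # Bounded buffer: the oldest records are dropped once it is full.
        self.buffer = deque(maxlen=max_buffer)

    def handle(self, reading):
        # The time-critical decision is computed first and never waits on the network.
        decision = self.model(reading)
        self.buffer.append({"reading": reading, "decision": decision})
        self.flush()
        return decision

    def flush(self):
        """Send buffered records in order; stop at the first failed delivery."""
        while self.buffer:
            if not self.uplink(self.buffer[0]):
                break
            self.buffer.popleft()
```

In this sketch the decision is returned whether or not the uplink succeeds, and buffered records are forwarded in order once connectivity returns. A production system would also need persistence across restarts and back-pressure handling, which are omitted here.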

Local Inference: A Pillar of Operational Performance

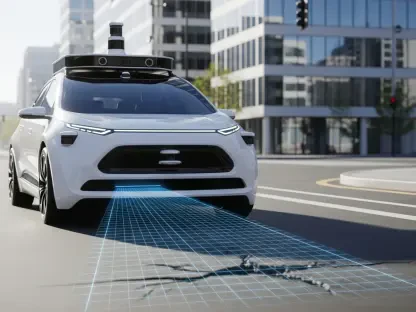

Edge AI is reshaping real-time production flow management by running inference inside operational equipment rather than in a centralized system. Embedded AI accelerators and specialized processors integrated into industrial systems allow machine vision models to perform high-speed quality inspection on production lines, detecting and classifying defects during active manufacturing. Because the analysis is local, decisions are made at the point of production: non-conforming items can be identified and removed without interrupting downstream operations, which reduces scrap rates and improves material efficiency. Research consistently highlights that the strategic value of industrial data is shifting from ownership and collection toward the speed and locality of inference, with value created by rapidly converting sensor data into operational action. Real-time responsiveness at the edge is therefore increasingly recognized as a key factor in factory agility, since it reduces latency and avoids the bottlenecks of centralized processing architectures.
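A back-of-the-envelope check makes the latency argument concrete. The sketch below assumes each item must be inspected and, if necessary, rejected before the next one arrives (no pipelining). The function names and all figures are illustrative assumptions, not measurements from any deployment.

```python
def per_item_budget_ms(items_per_minute):
    """Time available per item if each one is handled before the next arrives."""
    return 60_000 / items_per_minute


def meets_budget(items_per_minute, inference_ms,
                 network_round_trip_ms=0.0, actuation_ms=0.0):
    """True if inspect-and-reject finishes within the per-item window."""
    total_ms = inference_ms + network_round_trip_ms + actuation_ms
    return total_ms <= per_item_budget_ms(items_per_minute)


# Illustrative numbers only: 600 items/min leaves 100 ms per item.
edge_ok = meets_budget(600, inference_ms=30, actuation_ms=20)
cloud_ok = meets_budget(600, inference_ms=30,
                        network_round_trip_ms=120, actuation_ms=20)
```

Under these assumed figures the on-device path fits the window while the path with a network round trip does not. That is the kind of bottleneck local inference is meant to avoid.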

Reimagining Predictive Maintenance

Predictive maintenance in industrial environments increasingly relies on edge-based analytics, in which vibration and acoustic signals are processed at or near the asset rather than transmitted to centralized systems. Running condition monitoring models close to the source of the data makes them more responsive in identifying anomalies associated with mechanical degradation. Analyzing high-frequency sensor data locally also removes the need to stream it continuously to cloud infrastructure, which limits bandwidth consumption and improves operational efficiency in distributed environments. As noted in a 2025 IEEE Computer Society analysis of industrial AI and edge computing, deploying models on gateway devices enables low-latency inference that detects problems immediately, even in network-isolated areas, which directly supports resilience when external connectivity is limited or disrupted.
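As a rough illustration of local condition monitoring, the sketch below computes the RMS amplitude of each vibration window on the device and raises an alert only when it departs from a rolling baseline, so raw samples never need to leave the asset. The class name, the window counts, and the three-sigma threshold are assumptions chosen for the example. Real deployments typically use richer features such as spectral bands or learned models.

```python
import math
from collections import deque


def rms(window):
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


class EdgeVibrationMonitor:
    """Flags windows whose RMS exceeds a rolling baseline (hypothetical design)."""

    def __init__(self, baseline_windows=20, threshold_sigma=3.0, warmup=5):
        self.history = deque(maxlen=baseline_windows)
        self.threshold_sigma = threshold_sigma
        self.warmup = warmup  # windows required before alerts are issued

    def process(self, window):
        """Return an alert dict if the window is anomalous, else None."""
        level = rms(window)
        alert = None
        if len(self.history) >= self.warmup:
            mean = sum(self.history) / len(self.history)
            var = sum((v - mean) ** 2 for v in self.history) / len(self.history)
            # Floor the spread at 5% of the mean so a very steady baseline
            # does not make the detector over-sensitive.
            spread = max(math.sqrt(var), 0.05 * mean)
            limit = mean + self.threshold_sigma * spread
            if level > limit:
                alert = {"rms": level, "baseline": mean, "limit": limit}
        if alert is None:
            self.history.append(level)  # only healthy windows update the baseline
        return alert
```

Only the small alert record would be sent upstream, which is where the bandwidth saving comes from. The baseline is deliberately not updated by anomalous windows, so a developing fault does not gradually become the new "normal".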

Agentic AI: Toward a New Era of Human-Machine Collaboration

The evolution of agentic AI in industrial environments reflects a gradual transition from static automation toward systems capable of orchestrating multi-step workflows, optimizing operations, and assisting decision-making within clearly defined operational boundaries. In modern manufacturing and robotics environments, AI systems are increasingly embedded into production workflows to support coordination, scheduling optimization, and real-time performance monitoring rather than operating as fully independent agents. A September 2025 McKinsey analysis of agentic AI deployments across advanced industries found that the organizations getting the most from agent deployments almost universally emphasize "human-on-the-loop" governance: oversight frameworks in which humans manage, supervise, validate, and intervene when necessary. These organizations also actively manage the risks arising from hallucinations, decision boundaries, and cybersecurity threats. As a result, AI functions primarily as an augmentation layer that enhances operational efficiency and situational awareness rather than replacing human decision authority.
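One way to picture "clearly defined operational boundaries" is a gate that lets an agent act on its own only inside pre-approved limits and routes everything else to a person, while logging every decision for supervisors. The sketch below is a simplified illustration under that assumption. `ProposedAction`, `OversightGate`, and the parameter names are hypothetical and are not drawn from the McKinsey analysis.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedAction:
    name: str        # what the agent wants to do
    parameter: str   # which setpoint it touches
    value: float     # the value it proposes


@dataclass
class OversightGate:
    # parameter -> (min, max) range the agent may set without sign-off
    limits: dict
    audit_log: list = field(default_factory=list)

    def review(self, action):
        """Auto-approve in-bounds actions; escalate anything else to a human."""
        bounds = self.limits.get(action.parameter)
        if bounds is not None and bounds[0] <= action.value <= bounds[1]:
            outcome = "auto_approved"
        else:
            # Unknown parameters and out-of-range values both need a person.
            outcome = "escalated_to_human"
        self.audit_log.append(
            (action.name, action.parameter, action.value, outcome)
        )
        return outcome
```

Every decision lands in the audit log, including the automatic ones, so supervisors can review agent behavior after the fact and tighten or relax the limits over time.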

Bridging the Skills Gap: Governance, Training, and Cultural Adoption

This transformation nevertheless requires strict governance and substantial upskilling of technical teams. Understanding inference models and interpreting the decisions made by edge systems are becoming essential competencies for maintenance engineers. As a May 2025 Sphere technology advisory research report on Edge AI strategy makes clear, companies must invest in training and change management as part of their edge deployment. That includes upskilling traditional operations teams in AI and data interpretation and establishing new cross-functional teams that bridge IT and OT (operational technology). The success of Edge AI integration depends as much on the workforce enablement that surrounds the algorithms as on the quality of the algorithms themselves.

Conclusion: Local Intelligence as a Lasting Competitive Advantage

Edge AI is becoming a foundational layer in modern industrial systems, enabling real-time intelligence directly at the point of production. By shifting computation closer to machines and sensors, industries reduce latency, improve resilience, and support faster operational decision-making across manufacturing environments.

This distributed model is reshaping key use cases such as predictive maintenance, quality inspection, and production optimization, where immediate inference is critical to efficiency and safety. Hybrid edge-cloud architectures are emerging as the preferred design, combining local responsiveness with centralized training and coordination. At the same time, agentic AI is extending automation into workflow orchestration and decision support while keeping humans in supervisory and exception-handling roles.

Successful adoption depends not only on technology deployment but also on governance, workforce upskilling, and organizational readiness. Overall, Edge AI is redefining industrial performance around speed of inference and action, making real-time intelligence a core driver of competitiveness in manufacturing systems.